Fake News Detection

My Role

Machine Learning Engineer – End-to-End Pipeline Development

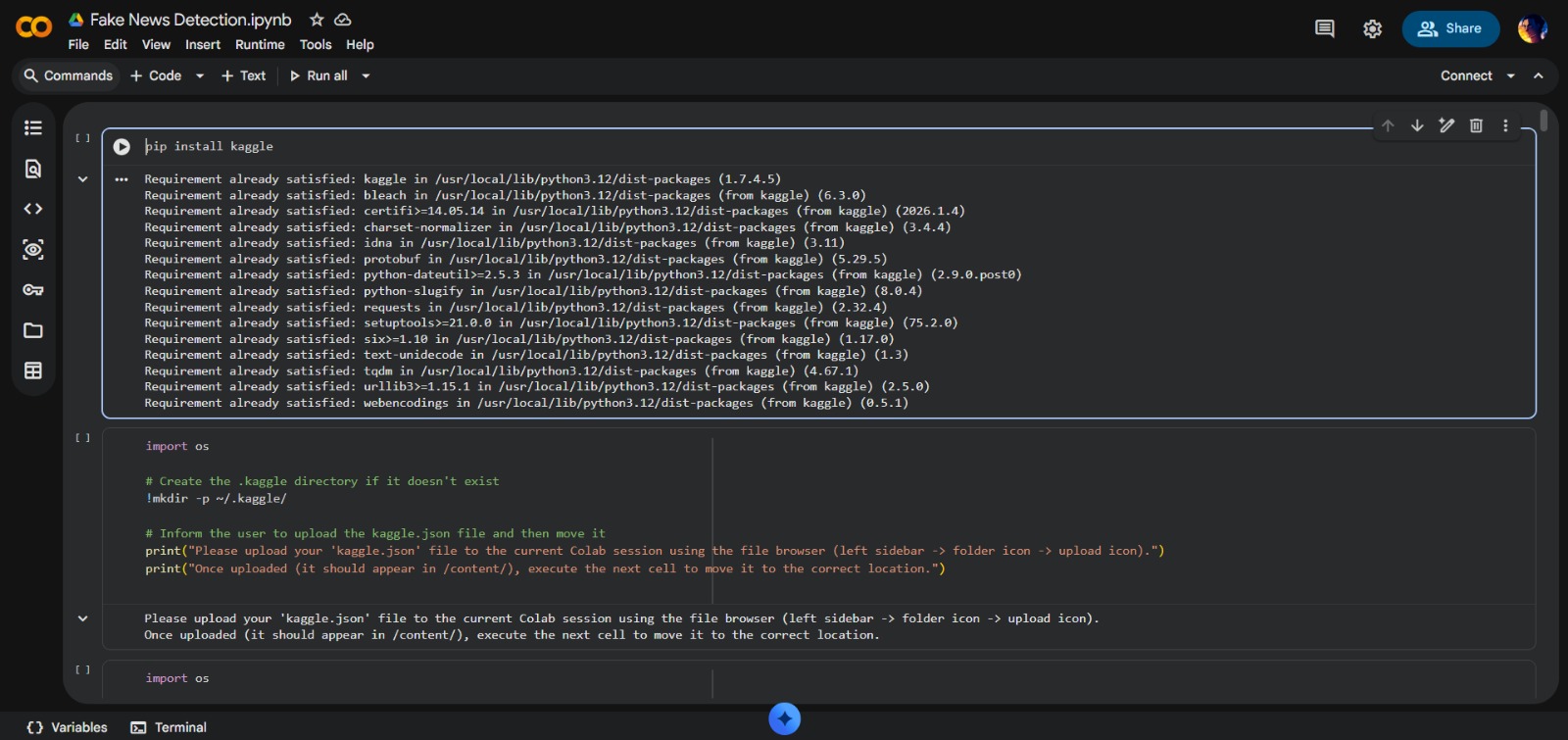

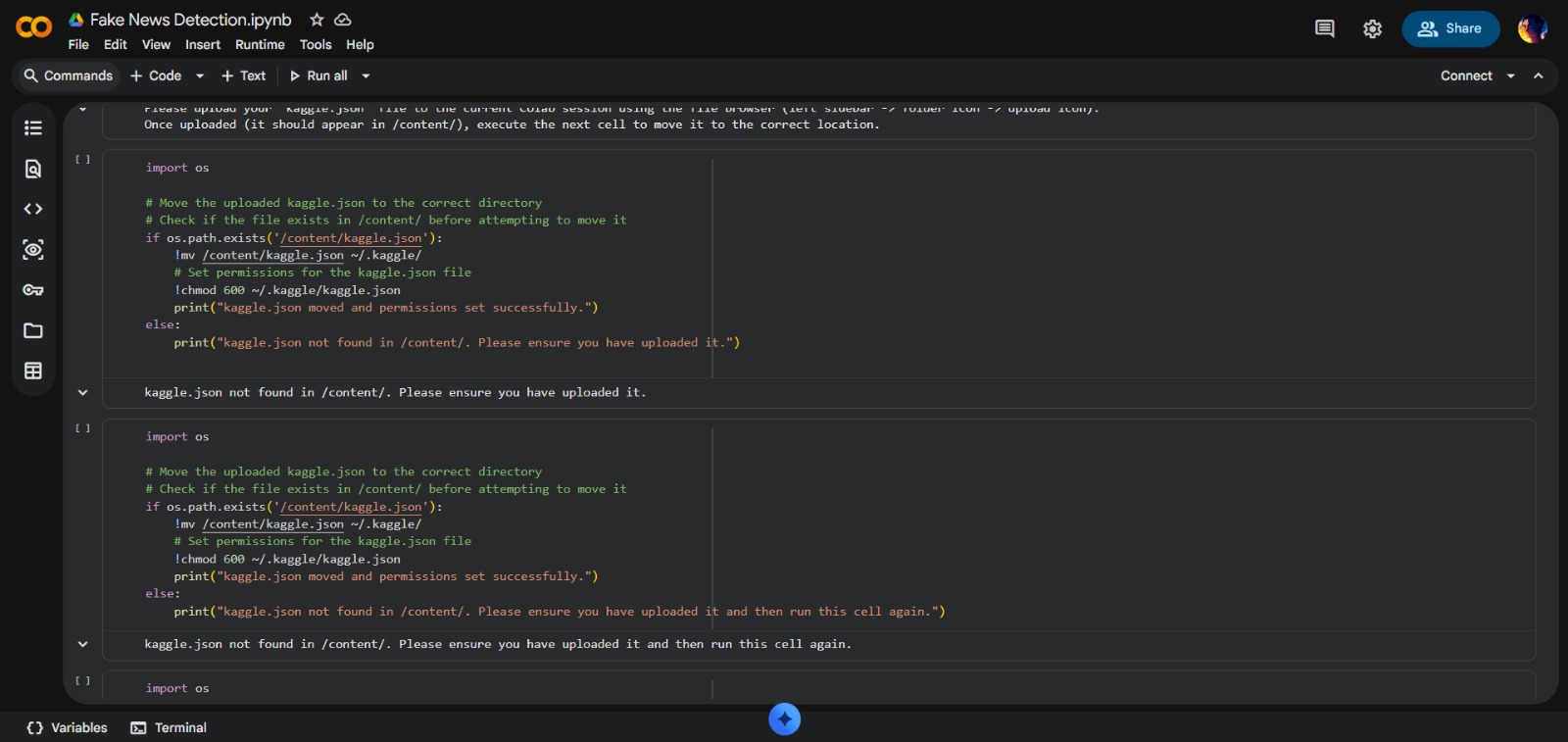

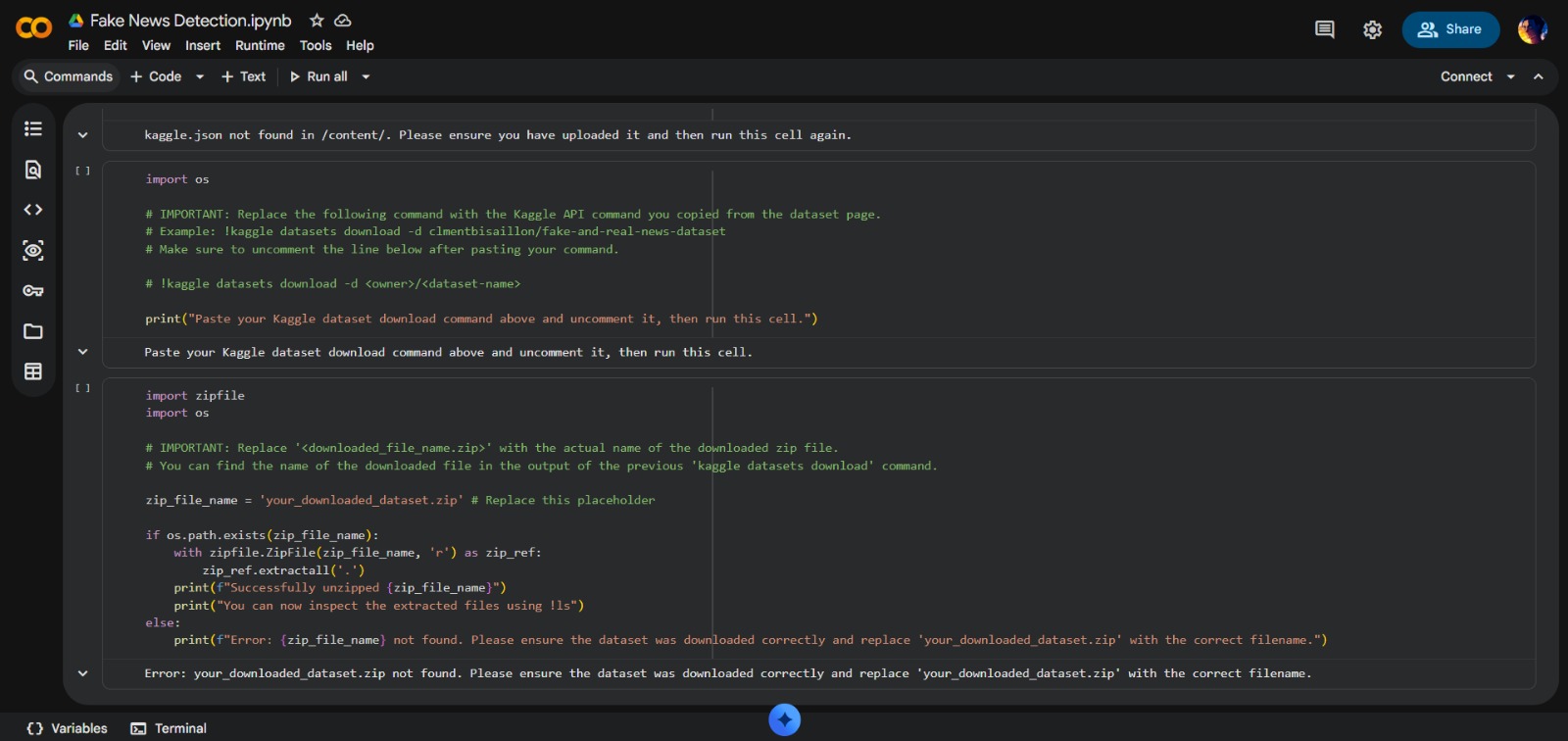

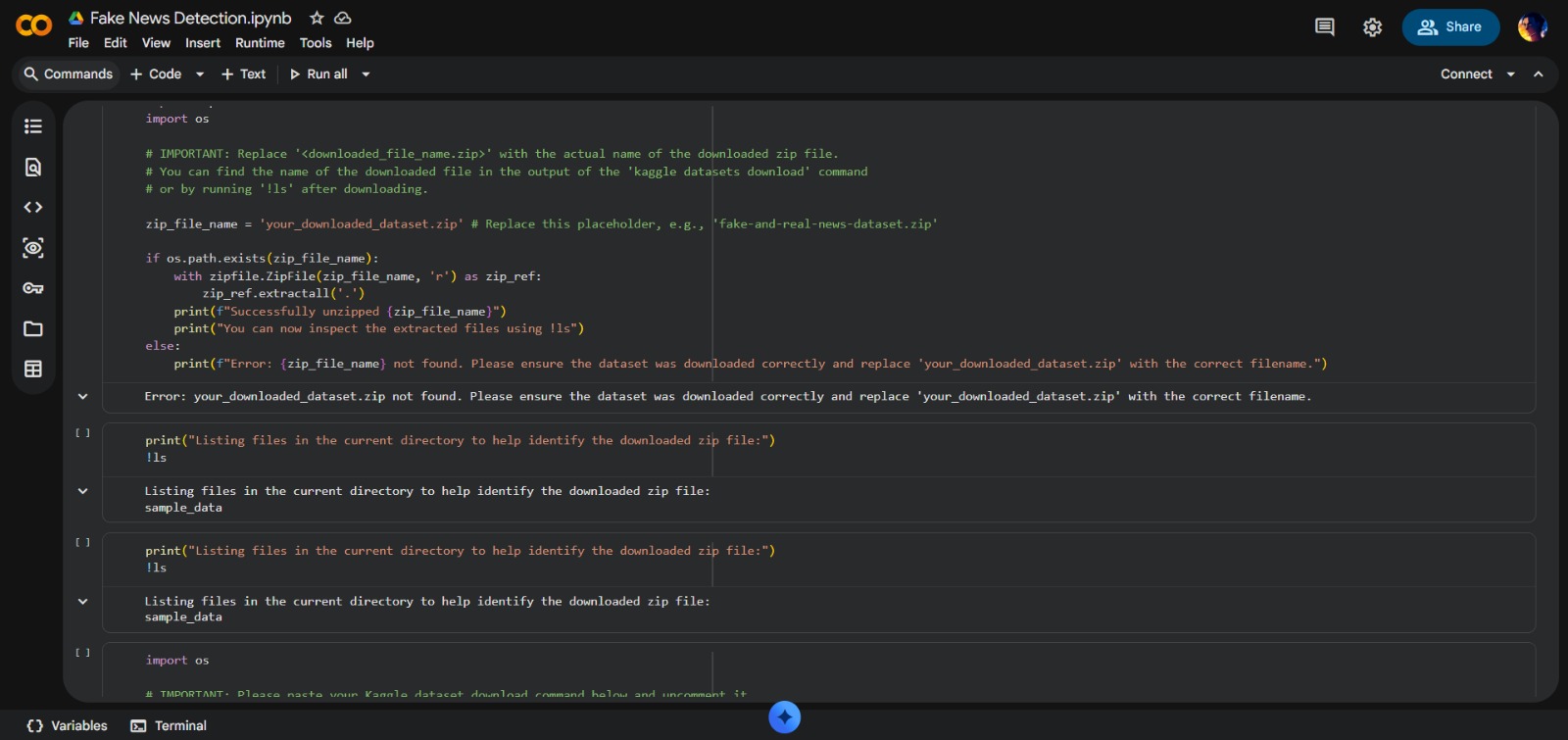

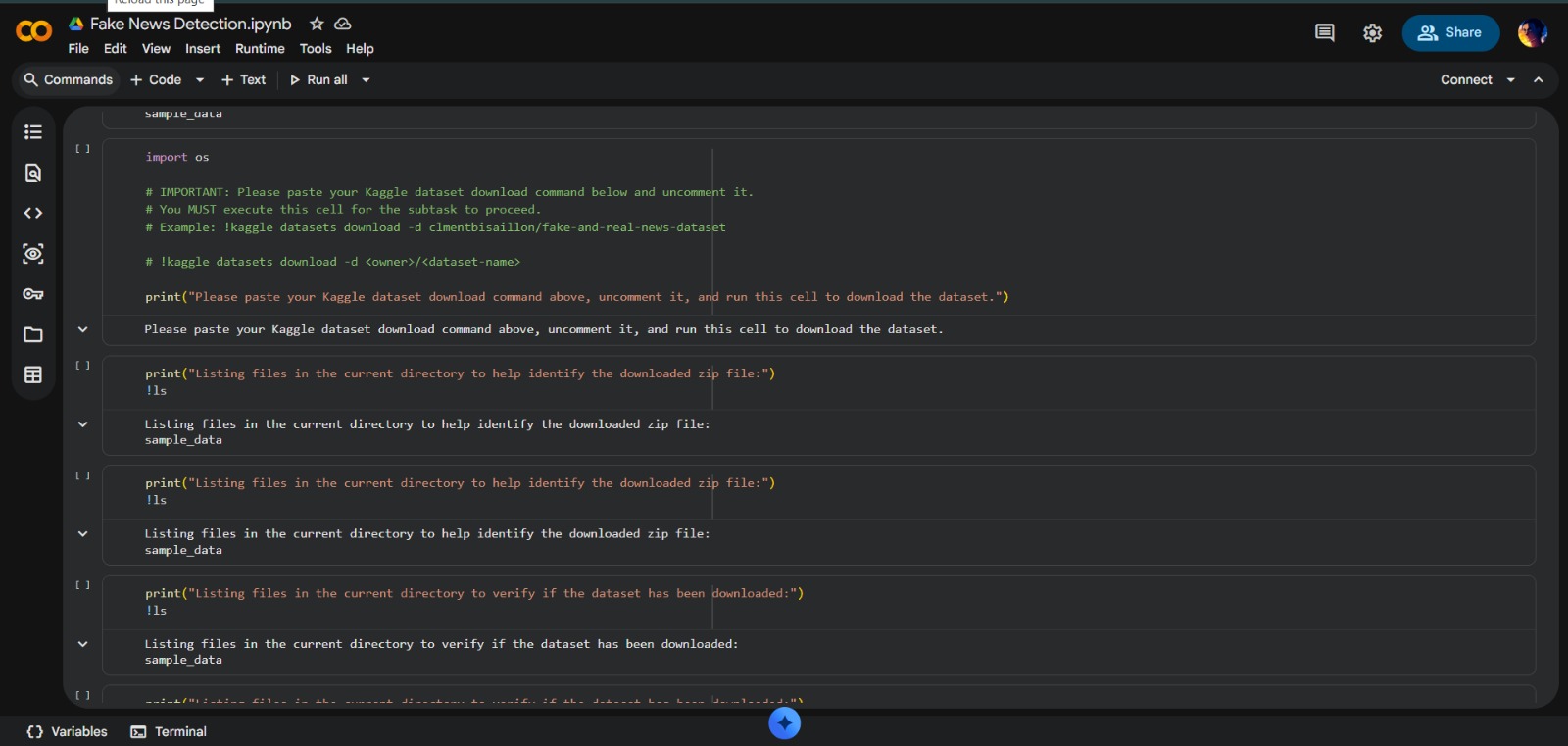

- Data Acquisition: Automating dataset retrieval via Kaggle API and web protocols

- Pipeline Engineering: Designing robust workflow for multi-source CSV files

- Data Sanitization: Implementing logic to filter empty datasets and handle corrupt files

- Exploratory Data Analysis (EDA): Structuring data using Pandas for insights

- Environment Configuration: Setting up Google Colab with secure API credentials

Project Highlights

- Self-Correcting Logic: Automated failure detection with manual intervention prompts

- Modular Code Structure: Maintainable and scalable notebook design

- High Performance: Optimized for Google Colab's virtual environment

- Professional Documentation: Integrated markdown cells guiding through ML lifecycle

- Scalable Architecture: Easy dataset swapping for different classification tasks